Overview

Four new target variations are being released on Numerai. There are 20D and 60D versions of each, for a total of 8 new targets. They will be released in the v4.1 dataset starting with the round opening on April 18.

One of them, target Cyrus, will become the official target used for payouts in one month, beginning with the round opening on May 13.

Along with this change, we are also changing the way correlation is calculated. The new calculation, called Numerai Corr, weights your lowest and highest predictions more heavily.

Models trained on Nomi still perform fairly well on this new score, but we do expect models trained on the newer targets to be a bit better.

Signals has no new targets released, but it will begin using the Numerai Corr variation of correlation for all scores.

New Correlation

When Numerai builds portfolios out of the Meta Model, a user’s highest and lowest predictions impact the Meta Model significantly more, and ultimately are more likely to make it into the portfolio. For this reason, in addition to looking at your model’s performance across all of its predictions, it’s important to also pay attention to the performance of the most extreme predictions.

We’ve previously suggested using something like “the correlation of your top and bottom 200 predictions” in order to make sure your predictions are also good in the extremes.
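As a rough illustration of that earlier suggestion, here is a minimal sketch of a "top and bottom 200" check. The function name `extreme_corr` and the parameter `n` are hypothetical, and it assumes `preds` and `target` are pandas Series in the same row order; it is not an official Numerai metric.

```python
import numpy as np
import pandas as pd


def extreme_corr(preds: pd.Series, target: pd.Series, n: int = 200) -> float:
    """Spearman correlation over only the n lowest and n highest predictions.

    Hypothetical helper for illustration; assumes preds and target share row order.
    """
    if len(preds) < 2 * n:
        raise ValueError("need at least 2 * n predictions so the tails do not overlap")
    # Positions of the predictions sorted from lowest to highest.
    order = np.argsort(preds.to_numpy(), kind="stable")
    tails = np.concatenate([order[:n], order[-n:]])
    tail_preds = preds.iloc[tails]
    tail_target = target.iloc[tails]
    # Spearman correlation is the Pearson correlation of the ranks.
    return float(
        np.corrcoef(tail_preds.rank().to_numpy(), tail_target.rank().to_numpy())[0, 1]
    )
```

Calling `extreme_corr(preds, target)` alongside a full-sample correlation gives a quick sense of whether a model holds up in its most extreme predictions.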

Improving on this idea, we’ve made a new correlation function which does the following:

- Rank your predictions

- Gaussianize your ranked predictions

- Raise those to the 1.5 power

- Transform target to be between -2 and 2 instead of 0 and 1

- Raise the target to the 1.5 power

- Take the Pearson correlation between the resulting predictions and target

```python
import numpy as np
from scipy import stats


def numerai_corr(preds, target):
    # preds and target are expected to be pandas Series (Series methods are used below)
    # rank (keeping ties) then Gaussianize predictions to standardize prediction distributions
    ranked_preds = (preds.rank(method="average").values - 0.5) / preds.count()
    gauss_ranked_preds = stats.norm.ppf(ranked_preds)
    # make targets centered around 0.
    centered_target = target - target.mean()
    # raise both preds and target to the power of 1.5 to accentuate the tails
    preds_p15 = np.sign(gauss_ranked_preds) * np.abs(gauss_ranked_preds) ** 1.5
    target_p15 = np.sign(centered_target) * np.abs(centered_target) ** 1.5
    # finally return the Pearson correlation
    return np.corrcoef(preds_p15, target_p15)[0, 1]
```

The result is that, as with Spearman correlation, you still don’t need to worry about the distribution of your submissions, only the rank ordering. However, the tails are now emphasized more than in a Spearman correlation.
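To make the rank-ordering point concrete, here is a small sketch on synthetic data (not Numerai data). It repeats the steps of `numerai_corr` so the snippet runs on its own, then scores the same predictions before and after a strictly increasing transform. The five-level toy target and the noise scale are assumptions chosen only for illustration.

```python
import numpy as np
import pandas as pd
from scipy import stats


def numerai_corr(preds: pd.Series, target: pd.Series) -> float:
    # Same steps as the function above, repeated so this snippet is self-contained.
    ranked = (preds.rank(method="average").values - 0.5) / preds.count()
    gauss = stats.norm.ppf(ranked)
    centered = target - target.mean()
    preds_p15 = np.sign(gauss) * np.abs(gauss) ** 1.5
    target_p15 = np.sign(centered) * np.abs(centered) ** 1.5
    return np.corrcoef(preds_p15, target_p15)[0, 1]


rng = np.random.default_rng(0)
# Toy target on a 0..1 grid (an assumption for illustration only).
target = pd.Series(rng.choice([0.0, 0.25, 0.5, 0.75, 1.0], size=5000))
# Noisy predictions that carry a weak signal about the target.
preds = target + pd.Series(rng.normal(scale=2.0, size=5000))

# exp() is strictly increasing: it changes the distribution but not the ranks.
transformed = np.exp(preds)

score_raw = numerai_corr(preds, target)
score_transformed = numerai_corr(transformed, target)
print(score_raw, score_transformed)  # the two scores should agree
```

Because the first step replaces predictions with their ranks, any transform that preserves the ordering leaves the score unchanged, up to floating-point noise.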

This correlation, when applied to every score, is more similar to TC than the Spearman version of the same scores, indicating that these tails really have been under-emphasized before now.

Here’s the before and after for Numerai scores:

And the before and after for Signals scores:

Both Numerai and Numerai Signals will move all non-payout scores to use Numerai Corr immediately, while the payout scores (CORR20) will switch to Numerai Corr soon. For Numerai, payouts will switch to CORR20V2 on May 13. For Signals, payouts will switch to FNCV4 on June 3.

New Targets

Cyrus, Caroline, Sam, and Xerxes are four new targets which are all similar, with small variations. They will be included in the v4 and v4.1 datasets beginning on April 18.

Cyrus is our best target, and will become the CORR20 payout target in one month. The other three will not be used for payouts, but you might find that they are useful for training your models to be good at predicting Cyrus.

The “target” column in the datasets will also contain values for target_cyrus_20 starting on May 13.

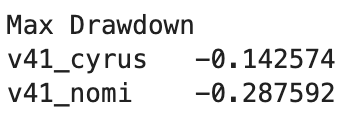

Below is a comparison between a model trained on Nomi scored with the current CORR20 (Spearman correlation with Nomi) and a model trained on Cyrus scored with the new Numerai Corr with Cyrus.

The mean score is about the same, but the consistency of the new model with the new scoring method is vastly improved.

Here’s a model built on target Nomi vs a model built on target Cyrus, both scored with Spearman on Nomi.

So even before the change to the definition of CORR20, switching to target Cyrus for training your models is beneficial, especially in terms of consistency.

Here we have an assortment of models on the new scoring.

All of the targets have something to offer and we hope that you use many of them for your ensembles. By themselves, however, the newer targets tend to outclass Nomi.
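One simple way to combine models trained on different targets is to average their percentile ranks. The sketch below is only an assumption about how an ensemble could be built, not a method prescribed here; the function `rank_average`, the model column names, and the ids are all hypothetical.

```python
import pandas as pd


def rank_average(predictions: pd.DataFrame) -> pd.Series:
    """Blend model predictions (one column per model) by averaging percentile ranks."""
    # pct=True maps each column into (0, 1], so every model is on the same scale
    # regardless of its raw output distribution.
    ranks = predictions.rank(pct=True, method="average")
    # Re-rank the row-wise mean so the blend is again spread over (0, 1].
    return ranks.mean(axis=1).rank(pct=True, method="average")


# Hypothetical predictions from three models, each trained on a different target.
preds = pd.DataFrame(
    {
        "model_nomi": [0.10, 0.40, 0.35, 0.80],
        "model_cyrus": [0.20, 0.30, 0.60, 0.90],
        "model_xerxes": [0.05, 0.50, 0.45, 0.70],
    },
    index=["id_a", "id_b", "id_c", "id_d"],
)
ensemble = rank_average(preds)
print(ensemble)
```

Since the scoring depends only on rank ordering, blending in rank space keeps any single model's output scale from dominating the ensemble.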

Website changes

CORR20V2 is the temporary name for the new Numerai Corr Cyrus score. It has been added to the compare scores page so you can see how your existing models would be affected by the change:

On May 13 we will remove the existing CORR20. CORR20V2 will then be known simply as CORR20, and payouts will switch over to this new measure.

Existing stakes will automatically switch to this new CORR20 for the round opening on May 13, so there’s no action required.

For Signals, CORR20V2 uses the same Signals target as the current CORR20, except it uses the new Numerai Corr instead of Spearman. Models will have their stakes transitioned to FNCV4 on Numerai Corr starting with the round opening on June 3.

Happy modeling