Original Tweet thread on this topic

Project 1

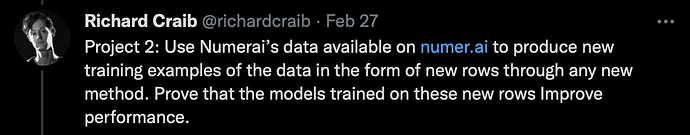

Project 2

Discussion on Methods

From our first Twitter Spaces discussion today, @jrb recommended contrastive self-supervised learning, which has worked well for him on this project and for creating new features.
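As a rough illustration only: the discussion does not say which contrastive setup @jrb used. A common choice is a SimCLR-style NT-Xent (InfoNCE) loss. Two randomly augmented views of the same row should embed close together and far from every other row in the batch. The sketch below computes that loss in NumPy. The `augment` function (random feature masking plus Gaussian noise) and its parameters are hypothetical. In practice, the views would first pass through an encoder trained in PyTorch, and that encoder's outputs would become the new features.

```python
import numpy as np


def augment(x, rng, noise_std=0.1, mask_prob=0.2):
    """Hypothetical tabular augmentation: zero out random features and add Gaussian noise."""
    keep = rng.random(x.shape) > mask_prob
    return x * keep + rng.normal(0.0, noise_std, size=x.shape)


def nt_xent_loss(z1, z2, tau=0.5):
    """SimCLR-style NT-Xent loss; row i of z1 and row i of z2 form a positive pair."""
    n = z1.shape[0]
    z = np.concatenate([z1, z2], axis=0)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)  # unit vectors, so dot product = cosine similarity
    sim = z @ z.T / tau
    np.fill_diagonal(sim, -np.inf)  # a row is never compared with itself
    # Numerically stable row-wise log-softmax
    row_max = sim.max(axis=1, keepdims=True)
    log_prob = sim - row_max - np.log(np.exp(sim - row_max).sum(axis=1, keepdims=True))
    # The positive for row i is row i + n, and vice versa
    pos = np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])
    return -log_prob[np.arange(2 * n), pos].mean()


rng = np.random.default_rng(0)
era = rng.normal(size=(64, 20))  # stand-in for one batch of rows from an era
view1, view2 = augment(era, rng), augment(era, rng)
aligned = nt_xent_loss(view1, view2)
shuffled = nt_xent_loss(view1, view2[rng.permutation(64)])
print(f"matched views: {aligned:.3f}, mismatched views: {shuffled:.3f}")
```

Matched views should score a lower loss than mismatched ones. Training an encoder to push this loss down is what makes its embeddings useful as features.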

Principal components analysis (PCA) would also be a very basic way to generate unsupervised features like this. The goal is to make these new features maximally helpful for a later model to train on. I don't think PCA works especially well for that, but I haven't checked it on the current data in a while.
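A minimal NumPy sketch of PCA features follows. It fits on training rows and then applies the same projection to new eras, so each new feature means the same thing over time. The random matrix stands in for real Numerai data. The names `fit_pca` and `pca_transform` and the choice of `n_components=5` are illustrative. scikit-learn's `PCA` class does the same job.

```python
import numpy as np


def fit_pca(train, n_components):
    """Fit PCA on a (rows x features) training matrix via SVD of the centered data."""
    mean = train.mean(axis=0)
    _, s, vt = np.linalg.svd(train - mean, full_matrices=False)
    explained = s[:n_components] ** 2 / np.sum(s ** 2)  # fraction of variance per component
    return mean, vt[:n_components], explained


def pca_transform(x, mean, components):
    """Project rows onto the fitted principal directions to get new features."""
    return (x - mean) @ components.T


rng = np.random.default_rng(0)
train = rng.normal(size=(500, 50))  # stand-in for stacked training eras
mean, components, explained = fit_pca(train, n_components=5)
new_features = pca_transform(train, mean, components)
print(new_features.shape, explained.round(3))
```

The resulting columns are uncorrelated on the training data and ordered by variance explained. High variance does not mean the features are helpful for predicting the target, which may be part of why PCA features underperform.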

Another method we discussed was diffusion models. These models take in a very noisy version of an era matrix and output another matrix that looks more like a real era. They have had excellent results generating realistic images from noise.

Diffusion Models Paper
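To make the idea concrete, here is a hedged NumPy sketch of the forward (noising) process and the noise-prediction training objective from the DDPM formulation. It treats an era as a standardized rows-by-features matrix. The linear beta schedule (1e-4 to 0.02 over 1,000 steps) follows the original DDPM setup. `denoiser` is a hypothetical placeholder for the network, such as a PyTorch model, that would be trained to predict the added noise. Generating new eras would then run the learned reverse process, starting from pure noise.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)   # linear noise schedule
alpha_bar = np.cumprod(1.0 - betas)  # fraction of signal variance kept after t steps


def q_sample(x0, t, noise):
    """Jump straight to step t of the forward process: x_t = sqrt(a_t) * x0 + sqrt(1 - a_t) * noise."""
    return np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise


def diffusion_loss(x0, denoiser, rng):
    """Noise-prediction objective: the denoiser sees x_t and t and must recover the noise."""
    t = rng.integers(0, T)
    noise = rng.standard_normal(x0.shape)
    xt = q_sample(x0, t, noise)
    return np.mean((denoiser(xt, t) - noise) ** 2)


rng = np.random.default_rng(0)
era = rng.standard_normal((500, 50))  # stand-in for a standardized era matrix
barely_noised = q_sample(era, 0, rng.standard_normal(era.shape))
pure_noise = q_sample(era, T - 1, rng.standard_normal(era.shape))
corr_start = np.corrcoef(era.ravel(), barely_noised.ravel())[0, 1]
corr_end = np.corrcoef(era.ravel(), pure_noise.ravel())[0, 1]
baseline = diffusion_loss(era, lambda xt, t: np.zeros_like(xt), rng)  # untrained "predict zero" denoiser
print(f"corr at t=0: {corr_start:.3f}, corr at t=T-1: {corr_end:.3f}, baseline loss: {baseline:.3f}")
```

At the first step the noised era is almost identical to the real one. By the last step it is essentially pure noise. A trained denoiser should beat the baseline loss of about 1.0 that the "predict zero" placeholder gets.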

We also discussed how the solution to the Jane Street competition on Kaggle used an autoencoder to create new features. See also the excellent thread on this with @jrai on Numerai's forum.
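For reference, a minimal autoencoder could look like the NumPy sketch below. A tanh encoder squeezes each row into a few latent values, a linear decoder reconstructs the row, and after training the latent values can serve as new features. This is a plain unsupervised autoencoder with hand-written gradients, not a reproduction of the Jane Street solution. The toy low-rank data, layer sizes, and learning rate are assumptions. In PyTorch, autograd and an optimizer would replace the manual backward pass.

```python
import numpy as np


def train_autoencoder(x, n_latent, lr=0.05, steps=2000, seed=0):
    """One-hidden-layer autoencoder (tanh encoder, linear decoder), full-batch gradient descent on MSE."""
    rng = np.random.default_rng(seed)
    d = x.shape[1]
    w1 = rng.normal(0.0, 0.1, size=(d, n_latent))
    b1 = np.zeros(n_latent)
    w2 = rng.normal(0.0, 0.1, size=(n_latent, d))
    b2 = np.zeros(d)
    losses = []
    for _ in range(steps):
        # Forward pass: encode to the bottleneck, then reconstruct
        z = np.tanh(x @ w1 + b1)
        err = z @ w2 + b2 - x
        losses.append(np.mean(err ** 2))
        # Backward pass for the mean squared reconstruction error
        g_out = 2.0 * err / err.size
        g_w2, g_b2 = z.T @ g_out, g_out.sum(axis=0)
        g_pre = (g_out @ w2.T) * (1.0 - z ** 2)  # chain rule through tanh
        g_w1, g_b1 = x.T @ g_pre, g_pre.sum(axis=0)
        # Gradient descent update (in place)
        w1 -= lr * g_w1
        b1 -= lr * g_b1
        w2 -= lr * g_w2
        b2 -= lr * g_b2

    def encode(new_x):
        """Map rows to latent values to use as new features."""
        return np.tanh(new_x @ w1 + b1)

    return encode, losses


rng = np.random.default_rng(1)
latent = rng.normal(size=(500, 3))
x = latent @ rng.normal(size=(3, 20)) + 0.1 * rng.normal(size=(500, 20))  # toy low-rank data
x = (x - x.mean(axis=0)) / x.std(axis=0)
encode, losses = train_autoencoder(x, n_latent=4)
new_features = encode(x)
print(new_features.shape, f"loss {losses[0]:.3f} -> {losses[-1]:.3f}")
```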

Discussion of where this fits with Numerai

If Numerai could create excellent new features or eras with these methods, we could potentially make them available to everyone as features in our Data API. We could also potentially learn the new synthetic features or eras from our raw data, which could be even more useful. Ultimately, giving Numerai users more features and more data through these methods could significantly improve everyone's models.

Projects

If you want to work on these projects, describe how you plan to solve them in a reply to this blog post. Code snippets will be useful. If you have gotten to the point of demonstrating that your method works, I would be happy to get on a 1-1 call with you to take a look. But first, try to convince me here on the forum that it's good and ready for me to criticize. We do want to share this research publicly so anyone can use it, and it might even make it into an example script some day. We use PyTorch a lot, so it would be best to use it if you know it, but we're not super strict.

Prizes

I am hoping to make rapid progress on this in March. If you have something good, Numerai will pay for flights and a hotel so you can present it to everyone at NumerCon on April 1 in SF. We will also give a large retroactive bounty if it's especially good, and our chief scientist Michael Oliver would also want to interview you for a full-time research job at Numerai.